- indexing text files local to the server that Solr is running on using the update handler

- indexing data with a SolrJ app running on the same server as Solr

- indexing data with a SolrJ app running on a different machine on the same network as the Solr server

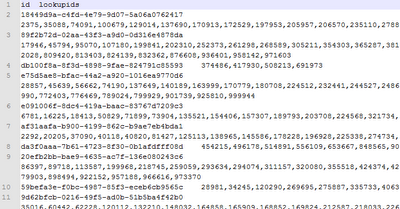

Here is an example of the output data:

The data is stored in a character-separated value file with just two columns. The first line of the file lists the fields that the data will be indexed into, and the field names are separated by the same separator that divides the columns of data. The first column is mapped to the schema's id field, and the second column is mapped to the schema's lookupids field.
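To make the file layout concrete, here is a minimal sketch that writes a file body in that shape. It assumes a TAB between the two columns and commas between the individual lookupids values, which is what the import URL further down specifies. The row values and the helper name `write_sample` are made up for illustration; they are not the actual test data.

```python
import io

# Hypothetical sample rows: these ids and lookupids values are invented for illustration.
SAMPLE_ROWS = [
    ("1", [10, 20, 30]),
    ("2", [40]),
]


def write_sample(out, rows, column_sep="\t", value_sep=","):
    """Write a header line naming the fields, then one line per document."""
    # The header uses the same separator as the data columns.
    out.write(column_sep.join(["id", "lookupids"]) + "\n")
    for doc_id, lookup_ids in rows:
        # Multiple lookupids values share one column, joined by value_sep.
        joined = value_sep.join(str(v) for v in lookup_ids)
        out.write(column_sep.join([doc_id, joined]) + "\n")


buffer = io.StringIO()
write_sample(buffer, SAMPLE_ROWS)
sample_text = buffer.getvalue()
print(sample_text)
```

Writing to an open file object instead of the `StringIO` buffer would produce a file like testdata.txt.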

I modified schema.xml to add a field named "lookupids", with type="int" and multiValued="true".

I copied a file named testdata.txt to the exampledocs directory, and then imported the data using this URL:

http://localhost:8983/solr/update/csv?commit=true&separator=%09&f.lookupids.split=true&f.lookupids.separator=%2C&stream.file=exampledocs/testdata.txt

I found the information on what to use in the URL here: http://wiki.apache.org/solr/UpdateCSV

The parameters:

- commit - Setting commit to true tells Solr to commit the changes after all the records in the request have been indexed.

- separator - The column separator is set to the TAB character (%09 is the URL-encoded ASCII value of TAB).

- f.lookupids.split - The "f" is shorthand for field, and the field referenced is the "lookupids" field. This parameter tells Solr to split the specified field into multiple values.

- f.lookupids.separator - This parameter tells Solr to split the lookupids values on the comma (%2C is the URL-encoded comma).

- stream.file - This parameter tells Solr to stream the file contents from the local file found at "exampledocs/testdata.txt".
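The escaping in the URL is easy to get wrong by hand, so here is a small sketch that assembles the same URL from the parameters listed above using only Python's standard library. The variable names are my own; the host, path, and parameter values are the ones from the URL in this post.

```python
from urllib.parse import parse_qs, urlencode, urlsplit

BASE_URL = "http://localhost:8983/solr/update/csv"

# Parameter values written as plain characters; urlencode handles the escaping.
params = {
    "commit": "true",
    "separator": "\t",              # TAB between the two columns
    "f.lookupids.split": "true",    # split lookupids into multiple values
    "f.lookupids.separator": ",",   # comma between the lookupids values
    "stream.file": "exampledocs/testdata.txt",
}

# safe="/" leaves the slash in the file path unescaped, matching the URL above.
import_url = BASE_URL + "?" + urlencode(params, safe="/")
print(import_url)

# Decoding the query string again shows what the escaped values stand for.
decoded = parse_qs(urlsplit(import_url).query)
print(repr(decoded["separator"][0]))
```

Opening `import_url` in a browser, or fetching it with any HTTP client, should trigger the same import, assuming Solr is running locally with remote streaming enabled as in the setup described here.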

<response>
  <lst name="responseHeader">
    <int name="status">0</int>
    <int name="QTime">17033</int>
  </lst>
</response>

Next I'll try indexing the same file using SolrJ on the same machine that is running Solr.
